Congratulations! In April 2022, one paper from the NLP group was accepted to NAACL 2022. The full name of NAACL 2022 is the 2022 Annual Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT 2022). It is the annual conference of the North American Chapter of the ACL and one of the top conferences in the field of natural language processing. NAACL 2022 will be held in Seattle, USA, July 10-15, 2022.

The accepted paper is summarized as follows:

- One Reference Is Not Enough: Diverse Distillation with Reference Selection for Non-Autoregressive Translation (Chenze Shao, Xuanfu Wu, Yang Feng)

- NAACL Main Conference, long paper

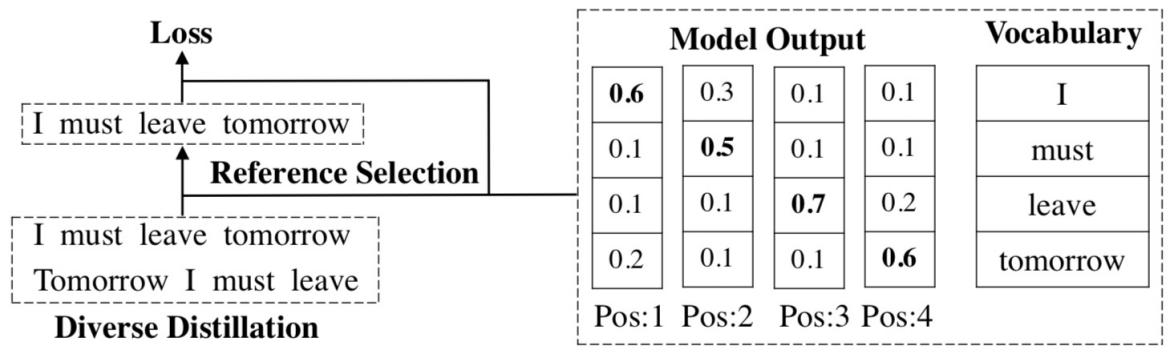

Abstract: Non-autoregressive neural machine translation (NAT) suffers from the multi-modality problem: the source sentence may have multiple correct translations, but the loss function is calculated only according to the reference sentence. Sequence-level knowledge distillation makes the target more deterministic by replacing the target with the output from an autoregressive model. However, the multi-modality problem in the distilled dataset is still nonnegligible. Furthermore, learning from a specific teacher limits the upper bound of the model capability, restricting the potential of NAT models. In this paper, we argue that one reference is not enough and propose diverse distillation with reference selection (DDRS) for NAT. Specifically, we first propose a method called SeedDiv for diverse machine translation, which enables us to generate a dataset containing multiple high-quality reference translations for each source sentence. During the training, we compare the NAT output with all references and select the one that best fits the NAT output to train the model. Experiments on widely-used machine translation benchmarks demonstrate the effectiveness of DDRS, which achieves 29.82 BLEU with only one decoding pass on WMT14 En-De, improving the state-of-the-art performance for NAT by over 1 BLEU.
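The reference selection step described in the abstract can be illustrated with a toy sketch. This is not the authors' implementation: it assumes, purely for illustration, that the NAT output is a list of independent per-position token distributions, that every reference has the same length as the output, and that "best fits" means lowest negative log-likelihood. The names `reference_nll` and `select_reference` are hypothetical.

```python
import math


def reference_nll(position_probs, reference, eps=1e-9):
    """Negative log-likelihood of one reference under independent
    per-position token distributions (a simplifying assumption)."""
    if len(position_probs) != len(reference):
        raise ValueError("toy sketch assumes output and reference have equal length")
    return -sum(
        math.log(probs.get(token, eps))  # eps stands in for unseen tokens
        for probs, token in zip(position_probs, reference)
    )


def select_reference(position_probs, references):
    """Return (index, loss) of the reference with the lowest loss."""
    losses = [reference_nll(position_probs, ref) for ref in references]
    best = min(range(len(references)), key=losses.__getitem__)
    return best, losses[best]


# Hypothetical model output: one token distribution per target position.
position_probs = [
    {"thank": 0.6, "many": 0.4},
    {"you": 0.6, "thanks": 0.4},
]
# Several valid translations of the same source sentence.
references = [["thank", "you"], ["many", "thanks"]]

best_index, best_loss = select_reference(position_probs, references)
print(references[best_index], best_loss)
```

In this sketch only the selected reference would contribute to the training loss for that sentence, so the model is not penalized for preferring one valid translation over another.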